The geometry of rules (or how to "game the system")

The way players cheese games reveals how markets, networks, and other systems are exploited.

My goal with Misaligned Markets is to understand how markets incentivize behaviors that lead to market failure. While I’ve leaned on analogies like corporate kung fu and capitalism as a computer system, I want to isolate patterns that work at a more granular level. To that end, I have started thinking about games, sports, and other competitive activities. This has helped me understand how rules in role-based systems can be abused to create action asymmetries that allow an actor to take actions others cannot. This idea is core to the conception of market power I’m working toward with this project.

It’s not just a game

The study of games is a broad area, but arguably, few works have been as influential as Homo Ludens by Dutch cultural theorist Johan Huizinga. Core to Huizinga’s thesis is the concept of the magic circle. For Huizinga and the theorists who came after him, many domains of human interest—from the free play of children, to religious rituals and competitive sport—function as little slices of reality carved out from the rest of the world. These are, in effect, sacred spaces with their own rules where actions take on meanings defined by the activity. Though Huizinga coined the term, he rarely used it, with later cultural theorists of games and play being the ones to elaborate on this idea in the 2000s and 2010s as online games and augmented reality experiences grew in popularity.

Human activity as imagined worlds

The magic circle describes how people collectively step into imagined worlds governed by unique logics. The circle keeps ordinary reality at bay while granting special meaning to what happens inside it. For instance, a game‑winning three‑pointer matters profoundly in an NBA Finals match but not during a casual practice or a child’s playground game. This framing extends well beyond sports. Workplaces, classrooms, and religious ceremonies all function as self‑contained worlds, each with its own expectations and codes of behavior that may operate independently of what’s happening outside their boundaries.

The most interesting aspect of this literature is how it has evolved from Huizinga’s initial concept. The term was revived to describe virtual worlds like Second Life, Ultima Online, Warcraft, and other Massively Multiplayer Online games from the late 1990s and early 2000s. As theorists and scholars pointed out, the defining feature of these games is the ways in which activities in-game affect the real world and vice versa. For example, a game developer might change a feature, like the in-game economy, in response to whether players on average are having too easy an experience. In a similar vein, players may come to value in-game assets so much that they’re willing to pay real money for them. Well before blockchain, Bitcoin, or Axie Infinity, games like EVE Online had detailed economies involving the exchange of real currencies.

For this reason, some games scholars have urged that the field move away from the magic circle. However, there is a basic intuition that the circle retains, even if there are domains that aren’t perfectly encapsulated and isolated from the broader world. Edward Castronova, in his 2005 book Synthetic Worlds: The Business and Culture of Online Games captures this well. In chapter 6 titled The Almost-Magic Circle, he writes:

The synthetic world is an organism surrounded by a barrier. Within the barrier, life proceeds according to all kinds of fantasy rules involving space flight, fireballs, invisibility, and so on. Outside the barrier, life proceeds according to the ordinary rules. The membrane is the “magic circle” within which the rules are different (Huizinga 1938/1950). The membrane can be considered a shield of sorts, protecting the fantasy world from the outside world. The inner world needs defining and protecting because it is necessary that everyone who goes there adhere to the different set of rules. In the case of synthetic worlds, however, this membrane is actually quite porous. Indeed it cannot be sealed completely; people are crossing it all the time in both directions, carrying their behavioral assumptions and attitudes with them. As a result, the valuation of things in cyberspace becomes enmeshed in the valuation of things outside cyberspace.

Castronova’s almost-magic circle reflects the notion that many human endeavors function like distinct spaces with bounded rules, but that there’s no way to completely cordon them off from the world. What makes these domains function, broadly speaking, is that they are shared fictions. Most participants in these spaces benefit from their continued existence and thus submit to the rules and norms governing them. But when shared fiction and investment aren’t enough, enforcement exists to correct unintended or harmful behaviors.

Governing the (almost) magic circle

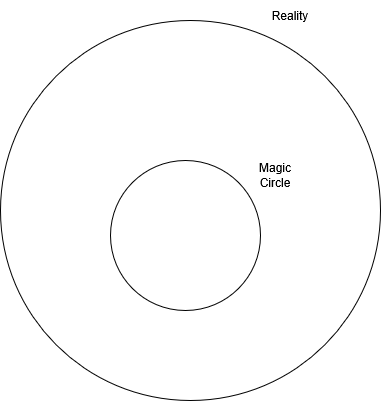

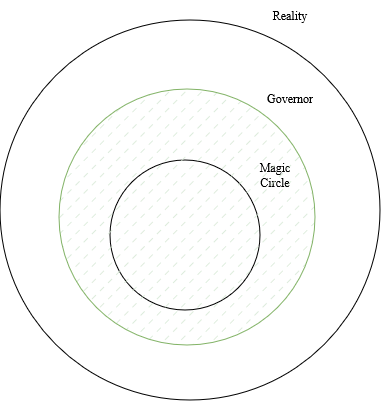

What I like about the almost-magic circle metaphor is that it gives us geometry we can loosely play with to illustrate different types of failures in a bounded domain. I’m going to put my own systems theory/cybernetic twist on it by establishing some ground truths that will allow us to view a magic circle like a rulemaker:

- Let the world beyond the magic circle, or reality (defined as R), be represented as a circle containing the set of feasible actions that a person can take. If you can imagine someone doing it, then it’s an action contained within R. R doesn’t have to contain only actions, but treating it that way is a simplifying assumption for now.

- Every magic circle (defined as C) exists within R. C contains a subset of domain-relevant actions, from R, as understood within the current implementation of the rules governing a given magic circle.

- Actors operate from R, even while participating in C, so they’re capable of choosing any action from R or C. However, this assumption will be removed in cases that we’ll discuss later.

- Outside every magic circle we can imagine there’s a “governor” (defined as G) that uses rules to evaluate actors’ actions for compliance with domain-relevant or approved actions in C. Governors are my metaphor for the total combination of norms, rules, rule makers, and regulators that define and enforce the permissible action space in C.[1]

- Enforcement of actions in C is bounded by the governor’s ability to detect violations. For reasons we’ll cover throughout this post, governors have a predictably imperfect view of the world that creates blind spots in most domains. Furthermore, because enforcement isn’t always free or easy, it sometimes doesn’t occur until after a violation has already happened.
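The ground rules above can be sketched in a few lines of Python. This is only a toy model: the action labels, the `Governor` class, and its `detectable` set are hypothetical names chosen for illustration, and real governors are far messier than a pair of sets.

```python
from dataclasses import dataclass

# Hypothetical action labels, chosen only for illustration.
R = {"dribble", "pass", "shoot", "travel", "dope", "bribe_referee"}  # feasible actions
C = {"dribble", "pass", "shoot"}                                     # approved actions


@dataclass(frozen=True)
class Governor:
    """Evaluates actions against C, but only the ones it can detect."""
    approved: frozenset
    detectable: frozenset

    def evaluate(self, action: str) -> str:
        if action not in self.detectable:
            return "unseen"  # blind spot: no ruling is ever made
        return "allowed" if action in self.approved else "penalized"


G = Governor(
    approved=frozenset(C),
    detectable=frozenset({"dribble", "pass", "shoot", "travel"}),
)

assert C <= R  # every magic circle sits inside reality

# Actions outside C that the governor never rules on.
blind_spots = sorted(a for a in R - C if G.evaluate(a) == "unseen")
print(blind_spots)
```

The point of the sketch is that enforcement depends on the intersection of what is forbidden and what is detectable. Anything in R that falls outside the `detectable` set never receives a ruling at all.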

Hiding from the governor’s gaze

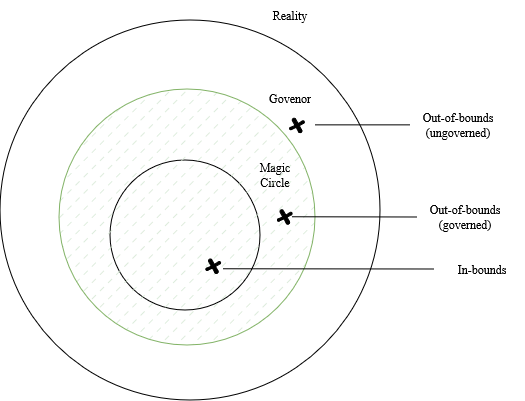

Participants’ compliance with the rules of a magic circle, as well as a governor’s enforcement of those rules, is what allows domains to function as distinct worlds. However, actors looking for an edge within the circle are incentivized to take actions that provide advantages others can’t access. This isn’t cheating, but rather involves exploiting the gap between how an activity is evaluated and how it unfolds in reality. In doing so, actors induce the system to generate valid advantages, and over time, these behaviors come to reshape the entire domain. Essentially, these exploits enable actors to “hide” in a governor’s blind spots. There are broadly three ways of doing so.

Governor failure mode 1: Out-of-bounds exploits

In a governed system, out-of-bounds exploits refer to advantageous actions or states that rely on criteria the rules do not or cannot regulate.

Examples: doping (historically), password sharing, information asymmetry

The first type is the out-of-bounds exploit, where an actor discovers how to take an action outside C without the governor responding. This failure mode exists whenever there’s an action or attribute relevant to C that the rules are silent about. The actor isn’t necessarily concealing their behavior; instead, the governor simply isn’t equipped to recognize it. Essentially, a system can’t catch what it’s not designed to monitor.

The history of performance enhancement in competitive sports is a great example. Purportedly, this goes back to the earliest Olympic Games, if not much earlier. It’s not surprising how ancient a tradition doping is, as it takes place “outside” the circle, and its effects are hard to perceive through the categories players are judged by.

Once an exploit is discovered, others adopt it, at least until regulation catches up. At that point, a governor can decide to close the loophole by expanding detection capacity.[2] In modern sports, doping is seen as anti-competitive, so it’s policed, although sports leagues differ in the implementation and strictness of their anti-doping policies. A common complaint about the NBA from some fans and other sports governing bodies, for example, is that it’s too lax with testing. Whether this is true doesn’t matter here. What it highlights is that governors have a lot of flexibility in how they do (or don’t) regulate an out-of-bounds exploit.

Why might a governor choose not to close some loopholes? Sometimes it just makes for a better activity! For much of modern Olympic skiing’s history, teams were allowed to experiment with different types of waxes that helped reduce friction and increase speed. Waxing is so important that teams have invested in entire “waxing cabins” housing technicians who’ve developed proprietary application techniques. While the Olympic committee recently banned waxes based on per- and polyfluoroalkyl substances known as PFAS or “forever chemicals” (thanks, DuPont!), this was solely for environmental reasons.

When a governor does choose to regulate a circle, they might face resistance. Take, for instance, this year’s alleged penisgate scandal in ski jumping, which involved skiers injecting hyaluronic acid into their genitals and stuffing their underwear to make their crotch area bigger. The International Ski and Snowboard Federation (FIS) regulates ski suit size for player safety, requiring skiers to wear near-tailor-fit suits. However, teams have increasingly found ways around this because even marginal increases in suit surface area improve aerodynamics. Faking junk size to get larger suit measurements is just the latest strategy in this cat-and-mouse game. In other instances, resistance may not come from participants but from spectators. In basketball, for example, the slam dunk went from being ungoverned, to being banned, to becoming a core part of basketball because fans loved it.

Governor failure mode 2: Payoff fuzzing

Payoff fuzzing involves an actor searching for decision boundaries in a governed system. These are places where small or hard-to-detect differences significantly alter how a governor classifies an action or state. The goal is to sit on the favorable side of enforcement or push others toward sharper penalties.

Examples: flopping (basketball, soccer), gerrymandering, patent trolling

Out-of-bounds exploits are pretty intuitive to understand because they take place outside the circle or beyond a governor’s detection radius. However, since governors only see the world through their interpretation of the rules, blind spots exist inside the circle, too.

Payoff fuzzing allows actors to exploit the divergence between a rule’s punishment/reward structure and the actual behavior it was intended to produce. I’m borrowing the term fuzzing from cybersecurity, which refers to systematically probing a system with unusual or edge-case inputs in order to map its behavior to learn where vulnerabilities lie. A security admin might test how a program designed to store numbers responds to an assortment of inputs like letters, decimals, negative values, large numbers, random characters, or malformed strings. The goal isn’t to break the program, but to see which behaviors induce unexpected outcomes that could be taken advantage of by a hacker.
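To make the security sense of fuzzing concrete, here is a minimal sketch. The `store_number` routine is a hypothetical target invented for this example, and the `fuzz` helper is a bare-bones stand-in for real fuzzing tools, which generate inputs automatically rather than from a hand-written list.

```python
def store_number(raw: str) -> int:
    """Hypothetical target: a naive routine meant to store small whole numbers."""
    value = int(raw)  # raises ValueError on letters, decimals, malformed text
    if value > 999:
        raise OverflowError("value too large")
    return value      # note: negative values are never checked


def fuzz(target, inputs):
    """Feed each input to the target and record how it responded."""
    report = {}
    for raw in inputs:
        try:
            report[raw] = f"accepted -> {target(raw)}"
        except Exception as exc:
            report[raw] = f"rejected ({type(exc).__name__})"
    return report


edge_cases = ["42", "abc", "3.14", "-7", "1000000", " 12 ", ""]
report = fuzz(store_number, edge_cases)
for raw, outcome in report.items():
    print(repr(raw), outcome)
```

In this toy run the interesting findings are the inputs that get accepted when the designer probably didn't intend them to be: the negative number and the padded string. Those are the places a tester would look at next.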

In games and sports, players fuzz the boundary of enforcement by testing borderline‑valid actions to see how the governor interprets them. Through trial and observation, they learn which gestures, timings, or framings tilt rulings in their favor. The exploit comes not from violating the rules but from understanding their interpretive texture. This can be thought of as a search through the space of actions that resemble governed actions but bias the governor to act in a player’s favor.

The clearest example of this is tactical fouling (sometimes called professional or intentional fouls, depending on the sport). Fouls are intended to penalize inappropriate contact in games like basketball, football, and soccer.[3] However, players have learned how to “fuzz” the boundary of what a governor considers a foul by strategically choosing when and how to initiate contact with the rival team. For example, defenders may choose to commit so-called good fouls, because the payoff of initiating a foul is preferable to the loss of allowing the game to proceed as is. Similarly, behaviors like flopping attempt to manipulate how an interaction is categorized by turning ambiguous or even non-contact into a ruling that provides a payoff.[4] Knowledge about fuzzing via flopping is so common that it’s been parodied relentlessly in popular culture.

Regardless of intention, the exploit here doesn’t come from the rule being unclear, but rather that the governor must make categorical decisions (foul/no foul, flagrant/common) that result in payoff cliffs where small differences in behavior can trigger drastic changes in rulings.
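A payoff cliff can be sketched as a step function. Everything here is an assumption made for illustration: the 0 to 100 "perceived contact" scale, the thresholds at 50 and 90, and the payoff numbers are invented, not drawn from any rulebook.

```python
def ruling(perceived_contact: int) -> str:
    """Hypothetical referee: maps a 0-100 impression of contact onto discrete calls."""
    if perceived_contact < 50:
        return "no foul"
    if perceived_contact < 90:
        return "common foul"
    return "flagrant foul"


# Hypothetical payoffs to the player who was contacted.
PAYOFF = {"no foul": 0.0, "common foul": 1.5, "flagrant foul": 3.0}


def find_cliffs():
    """Sweep the scale and report where a one-point change flips the ruling."""
    cliffs = []
    for level in range(100):
        before, after = ruling(level), ruling(level + 1)
        if before != after:
            jump = PAYOFF[after] - PAYOFF[before]
            cliffs.append((level + 1, before, after, jump))
    return cliffs


for cliff in find_cliffs():
    print(cliff)
```

The sweep in `find_cliffs` is itself a crude form of fuzzing: it probes the governor's response surface to locate the points where a marginal change in how contact is perceived produces a discrete jump in payoff. A player who knows where those points are only needs to nudge perception across them.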

The evolution of fouls within the NBA is pretty fascinating when viewed from this perspective. While many actions can be classified as fouls, the strategic logic behind each varies. Some fouling strategies, like the Hack-a-Shaq (or other player), are opponent-directed, using penalties to impose costs on rivals whose weaknesses are known. Others, like foul baiting, are self-directed, aiming to extract positive payoff by converting borderline interactions into favorable rulings. Both cases, however, involve exploiting the difference in what fouls penalize and what they were meant to incentivize.

Gaming higher-abstraction systems

In domains like sports, it’s easy to imagine a governor tracking physical actions. However, magic circles and governors can be used to describe abstract domains like law, markets, and computer networks. These are governed by abstraction classes that bind actions, system states, and other types of constructed representations. Once governance operates on representations, it can no longer directly observe underlying actors, actions, or states. That complexity introduces a distinct failure mode in which a representation is substituted for, or collapses distinctions in, the reality it’s intended to govern.

To illustrate this idea, consider a user account assigned to someone named Mike on a computer network. A governor[5] only ever “sees” the account, not the person using it. Therefore, there is no way to ensure that “Mike” is actually the person logging into the account. More advanced security measures might try to tie the account to something physical like a phone, biometrics, a key fob, or some other dongle, but these can be stolen. Should that happen, the thief can take any valid action Mike can, without penalty. If Mike is a superadmin with full system access, then taking over Mike’s account is the best way for someone who lacks those capabilities to gain them (generally referred to as privilege escalation). This type of blind spot tends not to exist in physical domains. While sports have roles and classes, there’s generally no inherent exploitable ambiguity that these create. I can’t hire LeBron James to sub for me in a pickup game, nor is there some other way for me to smuggle LeBron’s talent onto the court.[6]
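The account example can be sketched as follows. The `Person`, `Session`, `ROLES`, and `authorize` names are hypothetical and stand in for whatever a real access-control system uses. The key assumption is that the authorization check only reads the account field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    name: str


@dataclass(frozen=True)
class Session:
    account: str      # the only field the governor ever reads
    operator: Person  # who is really at the keyboard; invisible to the governor


# Hypothetical role table mapping accounts to permitted actions.
ROLES = {
    "mike": {"read", "write", "delete_users"},
    "guest": {"read"},
}


def authorize(session: Session, action: str) -> bool:
    """Decide using the representation (the account), never the operator."""
    return action in ROLES.get(session.account, set())


mike_session = Session("mike", Person("Mike"))
thief_session = Session("mike", Person("Thief with Mike's key fob"))
guest_session = Session("guest", Person("Thief with Mike's key fob"))

print(authorize(mike_session, "delete_users"))
print(authorize(thief_session, "delete_users"))
print(authorize(guest_session, "delete_users"))
```

Two different realities (Mike and the thief) map to the same representation, so `authorize` cannot tell them apart. The same person on the `guest` account is denied, which is why taking over the account matters more than who the attacker is.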

Governor failure mode 3: Abstraction failure

An abstraction failure exists when a governed system makes decisions using abstractions that do not preserve the structure of reality they are meant to represent. This can occur either when relevant aspects of reality are not encoded in the abstraction or when distinct realities are mapped to the same abstraction.

Examples: patent trolling, shell companies, mis-scoped OAuth token

I call the core failure mode that high-abstraction domains introduce an abstraction failure. This occurs when the “gap” between a constructed abstraction and the thing it is meant to represent becomes misaligned enough to exploit. The failure is one of representation: the governor evaluates actions based on abstractions that either collapse meaningful distinctions or omit context necessary to interpret them correctly.

For example, patent trolls exploit interpretive slack in patent claims, as claims written in a particular way can make it costly for others to work on distinct but vaguely related implementations. The abstraction (a patent in this case) can be interpreted more broadly than it should, which an actor can then use to fuzz the legal patent enforcement boundary. They can essentially threaten litigation against many adjacent targets until settling with the troll is the least costly option.

While fuzzing and abstraction failure are different, there is a similar geometry. Both involve blind spots created by how a governor maps actions and relationships within the circle. Fuzzing is a search process that probes the governor’s response surface. Alternatively, abstraction failure describes a condition where the mapping between reality and representation either collapses distinct states into the same abstraction or fails to encode distinctions that matter for governance. Both cases create degrees of freedom that allow an actor to steer a governor’s behavior.

Not all abstraction failures arise from ambiguity or multiple interpretations. Some occur because the abstraction itself lacks the attributes required to map correctly to its intended use in reality. In these cases, the issue is not that the governor cannot choose between interpretations, but that the representation does not encode the dimensions needed to make the correct distinction at all. I’ll illustrate this idea with authentication tokens.

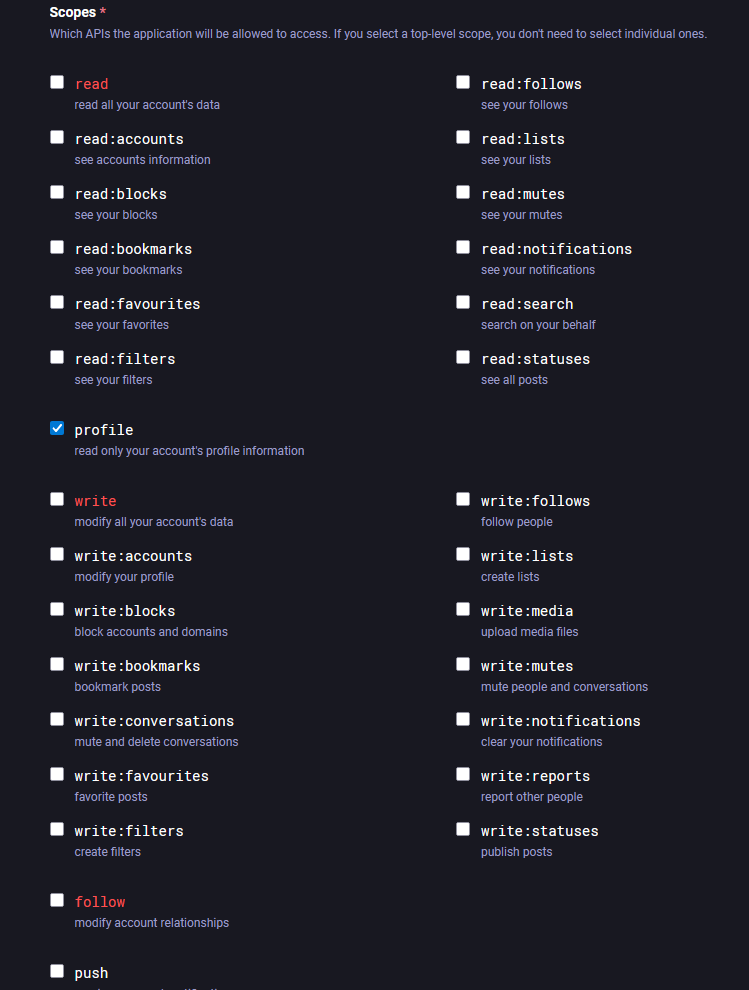

Some programs allow users to use access tokens to connect to a service. These tokens enable remote access or allow a second program to act on behalf of the user, without sharing the user’s actual credentials. They function a bit like visitor badges in the real world. Access tokens are typically scoped, which generally entails a user selecting what permissions a token allows its bearer to have. Can a user with the token only read data in the program? Can they write data? Delete it?

It’s standard practice to only scope the minimum necessary permissions required for a user to do their job. If a program only needs to read data, don’t give it a token with write permissions. This is known as the principle of least privilege (PoLP) and is one of the most important practices in cybersecurity. Practicing PoLP means making sure the abstraction, a token in this case, actually matches the reality of what the intended use is. A token that encodes permissions without the context of how, when, or why it should be used creates a representational gap. The governor treats the token as a sufficient representation of user intent, even though that intent is not actually encoded in the abstraction.
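A minimal sketch of token scoping, assuming a toy `AccessToken` type with a set of scope strings. The class, the scope names, and the `report-job` bearer are hypothetical, and real token formats carry much more than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessToken:
    bearer: str
    scopes: frozenset  # the only thing the governor consults


def is_permitted(token: AccessToken, action: str) -> bool:
    """The check sees permissions only; how, when, or why is not encoded."""
    return action in token.scopes


# What the hypothetical reporting job actually needs to do.
needed = {"read"}

broad = AccessToken("report-job", frozenset({"read", "write", "delete"}))
narrow = AccessToken("report-job", frozenset(needed))  # least privilege

# Permissions the broad token grants beyond the intended use.
excess = sorted(broad.scopes - needed)
print(excess)
print(is_permitted(broad, "delete"), is_permitted(narrow, "delete"))
```

The `excess` list is one way to picture the representational gap: it is the set of actions the abstraction permits that the intended use never called for. Least privilege shrinks that set, though even a narrow token still says nothing about intent.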

I consider these distortions introduced by abstractions as “negative affordances.” An affordance is a perceived possible action that an environment offers an agent—for example, a cup handle affords grasping. Negative affordances are the inverse. These are gaps in a representation that naturally guide actors toward exploitative behaviors.

So, what’s the point of this?

The guiding insight that led to Misaligned Markets was that market failure is extremely profitable and likely very common in markets. Most of my posts so far have been on the cultural, political, and economic incentives driving market failure. With this post, I’m using cases I’m familiar with to generalize the affordances an actor can use to “hide” market failure-inducing behaviors. This isn’t meant to be a complete descriptive ontology; the goal is to build intuitions that will let me reason about hiding places in more complex environments like financial markets.

Part of what led me here was my background in cybersecurity; in a lot of ways, what I’ve been doing this entire time is importing security thinking into economics. Security experts understand very well how the rules that govern a system can become an attack surface for hackers to use to their advantage. While this is not a mature framework, I’m publishing it because I’m committed to thinking out loud about my ideas to share my intellectual journey.

What exactly is this exploring?

In many of my earlier blog posts, you may recall that I used the adjective “lossy” to describe representations in capitalism. I’ve been reflecting on this idea a lot, as markets are constructed systems governed by abstractions via a process I call capitalist serialization. This is the process by which market capitalism compresses the messy social and material world into constructed abstractions like price, risk, liabilities, etc.

Recently, I realized that the lossy nature of market abstractions is very loosely analogous to various types of incompleteness in formal systems.[7] A market, a legal code, or a digital platform likewise can’t represent every state of the world it acts on. Gaps between representation and reality become negative affordances, or zones of discretion, that let participants decide how to game the system. If you’re familiar with sayings like “all models are wrong...” or “the map is not the territory,” then you might understand what I’m gesturing at. My interest is in finding all the places in the territory that make good ambush spots because they’re not referenced on the map.

The interesting thing is that this phenomenon isn’t limited to economies or games. Language, law, and cognition all depend on representations that only partially correspond to what they denote. Everything from philosophical thought experiments like Descartes’ Evil Demon to stories like The Matrix indirectly touches on this via the idea that sensory experiences are representations distinct from the underlying reality they represent. However, my idea examines representational ambiguity not as an epistemic problem, but as a structural one, given the rules of a system.

Is there already literature on this?

Many scholars have explored how abstraction affects governance, and I’m currently building a list for a future book club. James Scott, for example, talks about how governments struggle to represent local knowledge through bureaucratic knowledge in “Seeing Like a State.” I’m also vaguely aware that post-structuralist Gilles Deleuze,[8] with his co-author Felix Guattari, built an entire mode of analysis around how capitalism “territorializes” or captures facets of the world as representations whose meaning is transformed in the process. From the little I know, though, D&G’s work makes ontological commitments I don’t care for. There is also Philip Mirowski, whom I have mentioned before. His work centers on markets as computational systems, where selection pressure influences the role and function of what a market is for.

Within economics, the subfields of industrial organization and mechanism design study how market composition, structure, rules, and design lead to inefficiencies and structural disadvantages in market systems. I’m still learning about these areas, but am hoping they’ll improve this lens I’m using to understand market failure. However, despite the existence of literature on governance, capitalism, and abstraction, adversarial exploitation of abstractions is not something I’ve encountered outside of cybersecurity.

Building on this concept

This framework is very preliminary, and I need more time to build this intuition pump. I’m looking for more cases to validate my assumptions while simultaneously trying to explore the “geometry” within this concept to see if there are other places to hide rule-bending.

The long-term goal is to build toward an empirical research program built around a concept that I’m currently referring to as enforceable asymmetries of action.[9] As illustrated in the many examples throughout this post, the idea is that actors can find ways to exploit a system’s logics, much like a hacker, to find advantageous actions that others can’t pursue if the rules are being enforced as they exist. Doing so creates structural advantages and social relations conditioned on these advantages. In future posts, we’ll discuss how this affects the nature of competition in market capitalism. I’m also aiming to further develop my serialization concept so that I can go into detail about more complex action asymmetries.

More examples of “gaming the system”

For interested readers, I want to provide more examples to illustrate how I’m using this way of thinking. Below are more complex examples that I’ve thought through in security and markets.

Computer and network security

I’m going to first use computer systems to illustrate an idea I call an “interface,” which we will use when talking about action asymmetries in markets.

Social engineering

Social engineering involves an attacker convincing a victim to provide personal information under a plausible pretext. The exploit, typically a lie that motivates a victim to hand over sensitive information, usually takes place outside the governed system. Basically, if a hacker is trying to enter a company’s network starting from R, they’re going to be performing an action that the governor can’t observe at all. This could be in the form of a convincing email sent to a personal device or a fake tech support call. None of these actions exists within the company’s digital enforcement circle. From the governor’s perspective, no violation has occurred because the governor only monitors network transactions, not conversations between employees and strangers outside the network.

Once a victim surrenders their credentials, the exploit moves inside C. The attacker now controls a valid representation in the form of a user account that the governor treats as legitimate. From the governor’s point of view: user = authenticated employee. This is a great illustration that in the real world, many exploits are chains of different techniques. Here we have an out‑of‑bounds exploit in R that bypasses the governor’s perception, followed by an abstraction failure via hijacked account inside C.

Website spoofing

Spoofing is a classic example of a representational exploit via abstraction failure. Instead of breaking into a system, the attacker imitates a trusted entity, like a corporate portal, and persuades a victim to interact with that imitation as if it were genuine. The governor only enforces the symbols it knows—URLs, certificates, page layouts—and when those are convincingly faked, then every action looks valid.

Modern browsers are difficult to fool at the protocol level. Attackers typically can’t impersonate domains like chase.com directly, so they rely on lookalike domains via typosquatting and homograph attacks[10] and valid TLS certificates. In these cases, the browser behaves correctly by verifying the certificate, resolving the domain, and rendering the page. The resulting failure is not in enforcement, but in a mismatched representation.
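A small sketch of why a homograph domain is a representational problem and not a protocol one. The lookalike string below is constructed for illustration by swapping the Latin "c" for the Cyrillic letter es (U+0441), and `scripts_used` is a rough, hypothetical heuristic built on Unicode character names, not how browsers actually screen domains.

```python
import unicodedata

TRUSTED = "chase.com"
lookalike = "\u0441hase.com"  # first letter is CYRILLIC SMALL LETTER ES, not Latin "c"


def scripts_used(domain: str) -> set:
    """Approximate the scripts in a domain from the first word of each character name."""
    return {unicodedata.name(ch).split()[0] for ch in domain if ch.isalpha()}


# To software, these are simply two different, individually valid names.
print(lookalike == TRUSTED)

# A mixed-script label is one hint that something is off.
print(scripts_used(TRUSTED), scripts_used(lookalike))

# The ASCII form that DNS sees carries the internationalized-name prefix.
print(lookalike.encode("idna"))
```

The machine-level view treats the two names as unrelated strings, each of which can legitimately resolve and hold a certificate. The collision only exists in the rendered glyphs, which is the representation the human relies on.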

Let’s talk through an example. We can choose how we represent or scope R and C; R doesn’t have to be the totality of reality:

- Let’s assume R is the hacker-controlled web server. It is the website a victim actually logs into. R contains the web pages and web server components that the hacker controls. These are the pages, scripts, code, etc., in the background of the site.

- C is the user’s browser session and all the elements it will load as the victim interacts with the hacker-controlled domain.

- G is the browser and web security stack (DNS resolution, TLS validation, origin rules). It mediates the relationship between R and C; it’s the boundary between them. Abstractions that map to C live here, and this is where any representational gap lives.

All interaction between the user and the server is mediated by G. The browser does not expose raw reality; it translates server state into a structured interface—links, forms, and trust signals—that define what actions the user can take. Spoofing exploits this translation layer. The attacker does not need to violate the browser’s rules; they only need to supply inputs that satisfy them while pointing to the wrong underlying reality. A valid certificate for a deceptive domain is still valid. From the governor’s perspective, nothing is wrong.

At the beginning of the post, rule three stated: “Actors operate from R, even while participating in C, so they’re capable of choosing any action from R or C.” However, in abstract domains where the governor is mediating an interaction, that is no longer true. Effectively, G becomes an interface where an actor in C is assigned valid actions that map to R. This means an interface is not just a boundary; effectively, the hacker uses the governor’s ignorance to hand-pick which actions are valid for the victim to take without the victim’s knowledge. Imagine playing a brand-new video game on a modified game controller and not knowing why every button you press causes you to lose the game.

Eagle-eyed readers of this blog might recognize I used the word interface in last year’s “Capitalism Runs like a computer.” These are related but distinct notions of the word interface that both discuss a boundary between where the rules and reality meet, but they’re not at the same level of analysis. The previous use was discussing a legal boundary for the purpose of providing a high-level view of capitalism as a system. Currently, I’m discussing an interface boundary that exists at the level of an individual interaction or transaction. In the future, I’ll build on this distinction as I work towards a concept called “interface selection” which describes how some market actors can choose which projections a counterparty is governed by in an interaction.

The confused deputy

The confused deputy is a well-known computer security exploit where a malicious user compels a program with high-level access to a system to take an action on their behalf. While this is an old problem, known since the 1980s, it’s taken on new life because of LLMs. As I said in my last post, LLMs are shackled genies who must obey anything they interpret as a prompt. As people become more reliant on LLMs as agents, these models make for good deputies, or trusted entities with the authority to act on a user’s behalf. As with website spoofing, this involves leveraging the governor as an interface to exploit the user.

Hackers typically target LLMs by injecting hidden prompts via webpages to get LLMs to act using the permissions they’ve been given. A recent vulnerability in the Claude Chrome extension illustrates this. The security company Koi discovered a flaw (“shadowprompt”) that allowed attackers to extract user data with zero clicks. If the extension was installed, a malicious webpage could issue instructions to Claude to access whatever data was available in the user’s browser session—email, cloud storage, and more.

Here’s the setup:

- Let R be scoped to the browser’s runtime environment. R contains elements in the browser, like the webpage DOM (Document Object Model), cookies, cache, session contents, etc.[11]

- Let C be the Claude Chrome extension and its dependencies.

- G is again an interface like in the above example. It’s shaped by the access permissions and rules governing the Claude Chrome extension. Critical to this exploit, G enforces a trust boundary determining where valid requests to Claude can be sent from.

Where is the governor’s blind spot? In G, there was a mapping or a rule that allowed the Chrome extension to grant authority to any request coming from a *.claude.ai domain. The assumption was presumably that all domains in this namespace were firmly in Anthropic’s control. However, that proved not to be true when a security researcher found that they could run arbitrary code in a widget from a‑cdn.claude.ai, a domain in the *.claude.ai namespace.

By embedding this widget in a webpage viewed from R, an attacker could send prompts to the user’s Claude instance. From the governor’s perspective, everything looked valid. A trusted domain was interacting with a trusted extension, because requests were coming from the widget’s domain and not from the random website the user interacted with. Within G, Claude has access to whatever permissions come with the Claude Chrome extension. The attacker could use Claude’s authority to log into critical services and capture credentials or tokens with scripts from R that the governor could not see. There’s an argument that the Claude Chrome extension’s permissions are excessive, but people using this extension want an AI agent that won’t be blocked from doing things. That’s what makes LLMs natural targets for a confused deputy attack: on their own, they are incapable of verifying inputs.
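To make the blind spot concrete, here’s a minimal sketch in Python of a governor that decides trust by origin. This is not the extension’s actual code. The function names, the wildcard matching, and the stricter “exact host” alternative are all assumptions made for illustration; only the *.claude.ai rule and the a-cdn.claude.ai host come from the case described above.

```python
from fnmatch import fnmatch
from urllib.parse import urlparse

# Hypothetical rules; the real extension's logic is not reproduced here.
WILDCARD_RULE = "*.claude.ai"   # trust anything in the namespace
EXACT_HOSTS = {"claude.ai"}     # assumed stricter alternative


def host_of(origin: str) -> str:
    """Extract the hostname from an origin such as 'https://a-cdn.claude.ai'."""
    return urlparse(origin).hostname or ""


def governor_trusts_wildcard(origin: str) -> bool:
    """G's blind spot: every host in the namespace inherits full trust."""
    return fnmatch(host_of(origin), WILDCARD_RULE)


def governor_trusts_exact(origin: str) -> bool:
    """A narrower rule: only explicitly listed hosts are trusted."""
    return host_of(origin) in EXACT_HOSTS


def handle_request(origin: str, prompt: str, trusts) -> str:
    """The deputy acts with the user's authority whenever G says the origin is valid."""
    if trusts(origin):
        return f"EXECUTE with user's permissions: {prompt}"
    return "REJECT"
```

Under the wildcard rule, a prompt arriving from the widget’s host passes the check even though it was planted by a third-party page. Under the exact-host rule, the same request is rejected. Neither version lets the governor see what the scripts in R are actually doing. It can only judge the origin it is shown.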

Markets

I know it’s become a bit of a gag that I talk about everything but markets on a blog called Misaligned Markets. Anyway, let’s take what we learned and apply it to markets.

SQUID token

In 2021, a cryptocurrency inspired by the popular Korean drama Squid Game was created. It was always intended to be a rug pull, a scam where a crypto project is hyped to lure in buyers so insiders can pump-and-dump the token. What made SQUID unique is that its creators leveraged a technical feature of crypto smart contracts that prevented most investors from ever selling their tokens. This makes it a near-perfect illustration of markets as interfaces.

At the heart of the scam was a condition embedded in the token’s smart contract: anyone who wanted to sell SQUID also needed to hold MARBLES tokens, which could supposedly be obtained by ponying up 456 SQUID in a gambling game. It remains unclear whether this game was ever functional, but in practice the requirement prevented most holders from exiting before insiders did.

What makes this so devious is that in most markets, assets and commodities bundle or “serialize” rights like ownership, buying, and selling. With cryptocurrency, serialization is quite literal: the code in a token’s so-called smart contract determines a token holder’s rights. Let’s talk through the logic.

- In R, victims see a token named after a very popular show getting a lot of buzz and a lot of people buying in and not selling. That brings an air of legitimacy and inevitability to the scam. From the outside looking in, victims don’t know that people are “hodling” because they literally cannot sell.

- In C, we have a representation of a SQUID holder who is granted the right to put up 456 SQUID to earn some amount of MARBLES. C is also where holding SQUID appears equivalent to holding a tradable asset. Price charts, trading interfaces, and wallet balances all reinforce the idea that holders can buy and sell like any other token.

- G, the interface, enforces the mapping between representation and outcome. The smart contract determines which actions count. It recognizes “sell” only for addresses that satisfy the MARBLES condition, while treating all holders as equivalent in other respects.

The result is an enforceable asymmetry of action. By deciding what abstractions and slices of reality govern a relationship, an actor can leverage an interface to choose the actions available to a counterparty. In this case, while all participants appear to occupy the same role (“SQUID holder”), only some can execute the key action of selling. The price signal, generated within this constrained system, appears legitimate while reflecting a market where most actors can’t exercise the option to sell, which is supposed to be a basic aspect of ownership.
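The gating logic can be sketched as a toy ledger in Python. This is not the SQUID contract itself, which I’m not reproducing here. The class, method names, and the assumption that insiders hold MARBLES are all hypothetical, chosen only to show how one role (“holder”) can hide two different sets of available actions.

```python
class GatedToken:
    """Toy ledger where selling requires a second token (illustrative only)."""

    def __init__(self) -> None:
        self.squid: dict[str, int] = {}    # address -> SQUID balance
        self.marbles: dict[str, int] = {}  # address -> MARBLES balance

    def buy(self, address: str, amount: int) -> None:
        # Buying is open to everyone, so the "holder" role looks symmetric.
        self.squid[address] = self.squid.get(address, 0) + amount

    def can_sell(self, address: str) -> bool:
        # The gate: G recognizes "sell" only for addresses holding MARBLES.
        return self.marbles.get(address, 0) > 0

    def sell(self, address: str, amount: int) -> None:
        if not self.can_sell(address):
            raise PermissionError("sell rejected: address holds no MARBLES")
        if self.squid.get(address, 0) < amount:
            raise ValueError("insufficient SQUID balance")
        self.squid[address] -= amount


token = GatedToken()
token.buy("victim", 1000)
token.buy("insider", 1000)
token.marbles["insider"] = 1  # assumption: insiders satisfy the condition

token.sell("insider", 1000)   # succeeds
try:
    token.sell("victim", 1000)
    victim_sold = True
except PermissionError:
    victim_sold = False       # the victim's balance is real but unsellable
```

Both addresses show identical balances after buying, which is all that C exposes. The difference only appears when each one tries to act, and by then the rule in G has already decided the outcome.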

Like I said above, I am very interested in understanding the role that constructed relationships like this play in market power and other forms of political and economic power. This, however, is a rather extreme case given that cryptocurrency makes nearly every aspect of an asset modifiable in this way.

Lemons

George Akerlof’s seminal paper, “The Market for Lemons,” introduced the concept of information asymmetry and related concepts like adverse selection to mainstream economics. We can use C, G, and R to describe why this market breaks down and how institutions like signaling emerge to stabilize it. I’m using Akerlof’s simplified assumptions in Lemons for illustration.

- R is all observable and unobservable features of a used car: paint, underbody condition, service history, etc.

- C is the subset of those features that are legible and priceable in the market: mileage, stated condition, brand, etc.

- G is the market as a governing interface: it enforces transactions based on C (prices, contracts), but cannot act on features of R that are not observable or contractible.

Because buyers cannot observe true quality, they price cars based on expected (average) quality rather than the specific car’s condition. This creates a pooling equilibrium where price reflects the average of high- and low-quality cars.

The key asymmetry is that sellers can condition participation based on features in R, while buyers can only act on features in C. High-quality sellers withdraw when the pooled price is too low, while low-quality sellers remain. This worsens the average quality in the market, pushing prices down further and potentially leading to market unraveling. Disclosures and inspections are one way to lower the cost for a buyer to peer into R and see relevant attributes that haven’t been priced. But in some markets, this action asymmetry is only resolved by giving buyers another ability in the form of returns or warranties. Doing so lets a buyer step out of C and take actions within R.
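The unraveling dynamic can be sketched as a short simulation. The numbers below are assumptions for illustration: ten cars with true values from 1,000 to 10,000, sellers who value a car at its true quality, and buyers who value any car at 1.5 times its quality (the `buyer_premium` parameter, a hypothetical name). Buyers only see the pool, so they pay for its average.

```python
def unravel(qualities, buyer_premium=1.5):
    """Repeat pooled pricing until no remaining seller wants to withdraw.

    Returns the sequence of pooled prices and the cars still offered.
    """
    offered = sorted(qualities)
    prices = []
    while offered:
        # Buyers act on C: they pay for the average quality of the pool.
        price = buyer_premium * sum(offered) / len(offered)
        prices.append(price)
        # Sellers act on R: they stay only if the price covers true quality.
        staying = [q for q in offered if q <= price]
        if len(staying) == len(offered):
            break  # fixed point: nobody else leaves
        offered = staying
    return prices, offered


prices, remaining = unravel(list(range(1000, 11000, 1000)))
print(prices)     # pooled price after each round of withdrawals
print(remaining)  # cars still on the market at the end
```

With these assumed numbers, the pooled price falls each round as the best remaining cars withdraw, and only the three lowest-quality cars are still for sale when the process settles. A warranty or inspection changes the `staying` step, since it lets the price for a given car depend on its own quality rather than the pool’s.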

Tetraethyl lead (DuPont and Midgley!)

I talk all the time about DuPont’s decision to knowingly patent and use the neurotoxin lead as a gasoline additive. This deception, like lemons, withholds R-relevant criteria (toxicity) from the market. What makes this interesting, though, is that this deception targeted not only market participants but also regulators. This means that in C and G, DuPont benefited from the fact that regulators’ efforts to regulate fuel never priced in its key harm. This is effectively what an externality is: an entire market forms on the condition that harms in R are unknown or unpriceable, leading to governing abstractions that omit that harm.

Recommended resources

1. Book: A Hacker’s Mind: How the Powerful Bend Society’s Rules, and How to Bend Them Back by Bruce Schneier

Schneier’s book probably is the closest analog to what I’m doing, but his focus is mainly on listing examples of hacking outside computer systems. I think this book will make a great companion to this blog post given the sheer number of cases it presents. Schneier doesn’t have my categories and groupings for hacks, but I plan on reading his book to test my intuitions and further build on ideas presented in this post. For those interested, I am creating a Misaligned Markets reading group and this will likely be one of the first books I read. Subscribe to be notified! You can also watch a talk Schneier gave on the book below:

2. Video: What are the rules of the Open $ource game?

This is a fairly short video. Don’t know the creator, but I like that they present the open source software market as an interface with assignable, enforced roles. The more people think this way, the better!

3. Video: Line Goes Up by Dan Olson

This is the best documentary on NFTs and crypto more broadly, though somewhat dated. I still think it’s essential to understanding crypto’s fundamental flaws, and many of the examples Dan provides are illustrative of the ideas in this post. This is because crypto, explicitly, is a serialization project that doesn’t fully understand that automating regulation via code does not prevent enforceable action asymmetries.

4. Video: Why it's rude to suck at Warcraft by Dan Olson

A bonus. This is a video on how instrumental play changed the nature of the game Warcraft in a similar vein to Castronova’s almost-magic circle.

Affiliate disclaimer

Books on this page and throughout the site link to my Bookshop.org affiliate pages, where your purchase will earn me a commission that will go towards covering site costs. If you find the books I recommend at all interesting, consider purchasing through Bookshop, which shares profits with local bookstores. You can learn more about how you can support Misaligned Markets here.

- The term governor is borrowed from cybernetics. A governor is the component of a system that models and regulates it. While this is metaphorical, when we move on to security systems, it will be literal.

- We can metaphorically represent this by expanding the size of circle G to capture any actions initially outside the circle.

- Sorry, not sorry to my non-American audience.

- Some have argued that flops evolved partly because of refs failing to call actual fouls against players, as a response to get fouls that are deserved.

- In this case, the governor is literally represented by an operating system or some specific identity and access management system.

- Well, unless Space Jam is a true story...

- I was specifically thinking of Gödel’s theorems or the P versus NP problem. The similarity is not in content; rather, these are other illustrations of how incompleteness manifests.

- I use the term post-structuralist very loosely here. Deleuze probably would resist being categorized this way.

- This is so clunky, but I don't have a better name right now. You might have seen me reference this idea elsewhere as "differentials."

- These use domains that almost look like the target URL. Ex: "chaes.com", or using Cyrillic script to spell out letters, such as substituting the 'a' in chase with 'а' (yes, these are different).

- If you don't know what this is, it's all the random stuff you see when you hit F12 in a browser.